Published: 27 January 2026

The Spanish Data Protection Agency (AEPD) has released a new guidance document titled "Cuidado con lo que le confIAs" (Be Careful What You Trust to AI), offering citizens practical advice on the safe, responsible and conscious use of artificial intelligence tools. The publication is timed to coincide with International Data Protection Day, celebrated on 28 January.

According to a December 2025 survey by Spain's Centre for Sociological Research (CIS), nearly 63% of the Spanish population believes artificial intelligence will experience significant development over the next ten years. Recognising both AI's potential and its growing adoption, the AEPD emphasises the importance of understanding and preventing privacy risks associated with improper use of these technologies.

This guidance forms part of the AEPD's Strategic Plan 2025-2030, which promotes a culture of privacy and data protection amongst citizens and organisations whilst supporting technological innovation with appropriate safeguards.

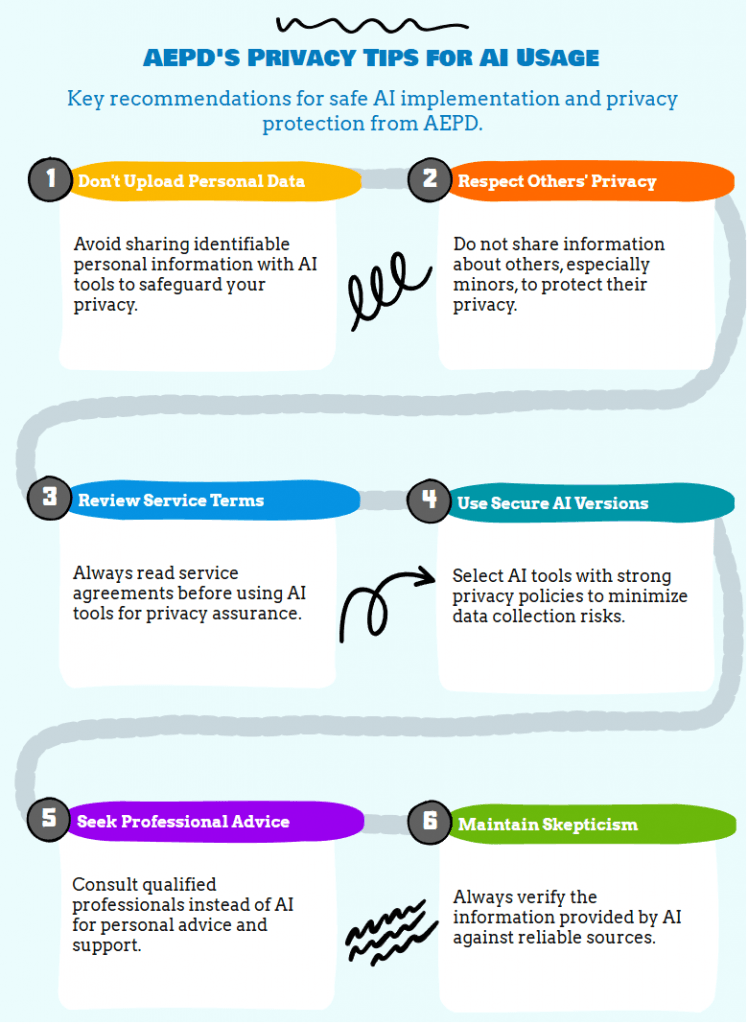

The AEPD's guidance provides ten essential recommendations for protecting privacy when using AI tools:

Be prudent and avoid sharing personal data such as your full name, address, phone number, DNI/NIE, or your image with AI systems. Such information could be leaked online or indexed by search engines unintentionally. When describing a situation to AI, use fictional cases and avoid details that could identify you.

Even if you don't provide personal identifiers, any information you share could be used to identify you for unintended purposes. Don't reveal sensitive information such as medical details, financial information, contractual terms, geolocation data, or information about visits to specific places.

Exercise caution when describing situations involving other people, particularly minors. Remove information that could identify them. Never use images of other people to generate new content. What begins as a joke could become a data protection violation or even a criminal offence.

If using AI at work, follow your organisation's information security policies. Specifically, don't include information that could reveal confidential data about your entity (such as contracts, reports, or strategies) or about its staff or clients.

Beyond the content you type into prompts, other information is also sent to AI systems, including cookies, IP addresses, tracking and usage data, metadata, device information, contact information, and location data. Choose AI services that guarantee they collect no more information than is necessary to provide the service. Remember that nothing is truly free.

If you find yourself in a delicate personal situation, seek help from qualified professionals. Artificial intelligence may appear to understand what you describe, but it does not truly comprehend or empathise with your situation and cannot offer qualified advice or an accurate professional diagnosis.

AI tools can provide convincing-sounding answers on all types of topics, though they may be incorrect. Don't let AI decide for you, and always maintain a critical attitude towards its responses or advice. It's your responsibility to verify the accuracy of information provided by cross-referencing with other sources.

Children and adolescents may not be fully aware of the risks involved in sharing information with AI tools. Explain in simple terms the advantages and risks of their use, what data they shouldn't reveal, and foster their critical thinking.

When experimenting with AI, don't use your personal or professional email to create accounts. To protect your privacy and security, use different email addresses for different services and ensure these email addresses don't reflect your personal data.

Additionally, check whether the platform allows you to delete your conversations and do so frequently. Deleting history reduces risk in case of potential leaks that could have negative consequences for your privacy.

Even asking about apparently innocuous matters, such as advice for everyday situations, can reveal a partial or complete picture of what you like, what interests you, or what worries you. Over time this contributes to a profile that reveals more about you than you imagine, including your strengths, preferences, and weaknesses.

The AEPD has previously warned about both visible and invisible risks of using third-party images in AI systems, even in apparently trivial or recreational contexts. The guidance specifically emphasises that images of other people (especially minors) should never be used to generate new content. Such practices could constitute data protection violations or even criminal offences.

To align with the AEPD's guidance and Spain's regulatory requirements under GDPR and LOPDGDD, we recommend the following immediate actions:

Every organisation must formally define, in an AI acceptable use policy (AUP), which AI tools are permitted, and explicitly ban the input of personal data into non-enterprise models.
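A ban on entering personal data into non-enterprise models can be backed by a technical safeguard in any internal tool that forwards prompts to an AI service. The Python sketch below is a minimal illustration under our own assumptions: the function name `redact_prompt` and the regular expressions (Spanish DNI/NIE shapes without check-letter validation, email addresses, and Spanish nine-digit phone numbers) are hypothetical choices, not an AEPD requirement. Pattern matching only catches direct identifiers in predictable formats; it will miss names, addresses and indirect details, so it supplements the policy rather than replacing it.

```python
import re

# Hypothetical patterns, for illustration only.
# Spanish DNI (8 digits + letter) or NIE (X/Y/Z + 7 digits + letter),
# optionally with a hyphen before the letter. Shape-based only: no
# check-letter validation, so mistyped numbers are still redacted.
ID_PATTERN = re.compile(r"\b(?:\d{8}|[XYZxyz]\d{7})-?[A-Za-z]\b")
# Deliberately loose email matcher.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Spanish nine-digit numbers starting with 6-9, optional +34 prefix,
# allowing spaces or hyphens between digit groups.
PHONE_PATTERN = re.compile(
    r"(?<!\d)(?:\+34[\s-]?)?[6-9]\d{2}[\s-]?\d{3}[\s-]?\d{3}(?!\d)"
)


def redact_prompt(text: str) -> str:
    """Replace obvious direct identifiers with placeholder tags."""
    text = ID_PATTERN.sub("[ID]", text)
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text


print(redact_prompt(
    "My DNI is 12345678Z, email me at ana@example.com or call +34 612 345 678."
))
# My DNI is [ID], email me at [EMAIL] or call [PHONE].
```

Redacting on shape rather than validating check letters errs towards over-redaction, which is usually the safer failure mode for this purpose.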

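Article 35 of the GDPR makes a Data Protection Impact Assessment (DPIA) mandatory before any processing, in particular using new technologies, that is likely to result in a high risk to individuals. AI tools that process personal data or perform profiling will frequently meet that threshold, so assess each deployment before it goes live.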
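To decide whether a particular AI deployment needs a DPIA, a common starting point is the rule of thumb in the WP29 DPIA guidelines (WP248 rev.01, endorsed by the EDPB): processing that meets two or more of nine listed criteria will usually require one. The Python sketch below encodes that screening step under our own assumptions; the `Criterion` names and the `dpia_likely_required` function are hypothetical, the criteria are paraphrased, and the result is a prompt for further assessment rather than a legal determination.

```python
from enum import Enum


class Criterion(Enum):
    """Paraphrase of the nine WP248 rev.01 criteria indicating high-risk processing."""

    EVALUATION_OR_SCORING = "evaluation or scoring, including profiling and predicting"
    AUTOMATED_DECISION = "automated decision-making with legal or similarly significant effect"
    SYSTEMATIC_MONITORING = "systematic monitoring"
    SENSITIVE_DATA = "sensitive data or data of a highly personal nature"
    LARGE_SCALE = "data processed on a large scale"
    COMBINED_DATASETS = "matching or combining datasets"
    VULNERABLE_SUBJECTS = "data concerning vulnerable data subjects"
    INNOVATIVE_TECHNOLOGY = "innovative use or new technological or organisational solutions"
    PREVENTS_RIGHTS = "processing that prevents exercising a right or using a service or contract"


def dpia_likely_required(criteria: set[Criterion]) -> bool:
    """Screening aid: two or more criteria usually means a DPIA is needed.

    WP248 notes that a single criterion can sometimes be enough, so a
    False result means "assess further", not "exempt".
    """
    return len(criteria) >= 2


# Hypothetical example: an AI tool that ranks job applicants' CVs.
cv_ranking_tool = {
    Criterion.EVALUATION_OR_SCORING,
    Criterion.AUTOMATED_DECISION,
    Criterion.INNOVATIVE_TECHNOLOGY,
}
print(dpia_likely_required(cv_ranking_tool))  # True
```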

Integrate the AEPD's decalogue into employee onboarding and continuous training programmes to foster a culture of data hygiene.

IT departments should audit for "shadow AI", the unauthorised use of AI platforms, by monitoring network traffic and, where necessary, blocking access to non-compliant tools.
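As a rough illustration of shadow AI auditing, the Python sketch below scans web proxy logs for requests to AI services that the AUP does not approve. It assumes a hypothetical log format of one whitespace-separated `timestamp user host` entry per line, and the watch-list of domains is an example only; real deployments would read from their own proxy, DNS or firewall logs and maintain the list themselves.

```python
from collections import Counter

# Example watch-list of consumer AI domains (an assumption; maintain your own).
AI_DOMAINS = {
    "chatgpt.com",
    "chat.openai.com",
    "gemini.google.com",
    "claude.ai",
    "perplexity.ai",
    "chat.deepseek.com",
}


def matches(host: str, domains: set[str]) -> bool:
    """True if host is one of the domains or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def find_shadow_ai(log_lines: list[str], approved: set[str]) -> Counter:
    """Count requests per (user, host) to watch-listed AI hosts not in `approved`.

    Assumes each line is 'timestamp user host', separated by whitespace.
    """
    hits: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        _, user, host = parts[:3]
        host = host.lower().rstrip(".")
        if matches(host, AI_DOMAINS) and not matches(host, approved):
            hits[(user, host)] += 1
    return hits


sample_log = [
    "2026-01-27T09:14:02 alice chatgpt.com",
    "2026-01-27T09:15:40 alice chatgpt.com",
    "2026-01-27T09:20:11 bob intranet.example.com",
    "2026-01-27T10:02:55 carol claude.ai",
]
approved = {"claude.ai"}  # hypothetical: covered by an enterprise agreement
for (user, host), count in find_shadow_ai(sample_log, approved).most_common():
    print(user, host, count)  # alice chatgpt.com 2
```

Domain matching cannot tell a consumer account from an enterprise account served from the same domain (here `claude.ai` is treated as approved purely for illustration), so flagged entries are a starting point for review rather than proof of non-compliance.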

For organisations already using AI tools, conduct an immediate review of vendor agreements to confirm what data protection guarantees they actually provide.

The AEPD is not prohibiting AI use. Rather, it's promoting "safe, responsible, and conscious" use of AI with appropriate safeguards. Organisations can and should use AI tools, but they must select enterprise-grade solutions with robust data protection guarantees and implement proper governance frameworks.

Whilst the guidance itself doesn't create new legal obligations, it reflects how the AEPD interprets existing GDPR and LOPDGDD requirements in the context of AI tools. Organisations that ignore this guidance and subsequently experience data breaches or non-compliance issues are likely to face harsher regulatory scrutiny and potential enforcement action.

Consumer tools (free versions of ChatGPT, Gemini, Claude, etc.) may train their models on user inputs by default, typically lack robust data processing agreements, don't offer data residency controls, and provide limited audit capabilities. Enterprise versions typically offer contractual commitments not to train on your data, Data Processing Agreements compliant with GDPR Article 28, options for data residency within the EU, audit logs and usage monitoring, and contractual confidentiality commitments for sensitive information.
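The safeguards listed above can serve as a simple checklist when reviewing AI vendor contracts. The Python sketch below is illustrative only: the `VendorTerms` fields mirror the enterprise safeguards described in this answer, while the class, the `missing_safeguards` function and the example values are hypothetical and do not describe any real product.

```python
from dataclasses import dataclass, fields


@dataclass
class VendorTerms:
    """Hypothetical record of what an AI vendor's contract commits to."""

    no_training_on_customer_data: bool
    gdpr_art28_dpa: bool
    eu_data_residency: bool
    audit_logs: bool
    confidentiality_commitments: bool


def missing_safeguards(terms: VendorTerms) -> list[str]:
    """Return the names of safeguards the contract does not provide."""
    return [f.name for f in fields(terms) if not getattr(terms, f.name)]


# Illustrative values only, not a description of any real product.
example_plan = VendorTerms(
    no_training_on_customer_data=True,
    gdpr_art28_dpa=True,
    eu_data_residency=False,
    audit_logs=True,
    confidentiality_commitments=True,
)
print(missing_safeguards(example_plan))  # ['eu_data_residency']
```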

Spanish law adds specific requirements. LOPDGDD Article 28 requires organisations to implement appropriate technical measures, which in the AI context means ensuring AI tools support data minimisation, purpose limitation, and the "bloqueo" (blocking) requirement for data that must be preserved but not actively processed. Additionally, organisations in the 16 sectors required to appoint a DPO under LOPDGDD Article 34 must ensure their DPO is consulted before deploying AI tools that process personal data.
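One way to reflect blocking and data minimisation in practice is to filter records before they reach an AI tool. The Python sketch below assumes a hypothetical customer-record layout with a `blocked` flag marking data under bloqueo (the blocking regime set out in LOPDGDD Article 32); blocked records are skipped entirely, and only the fields a hypothetical complaint-classification task needs are passed on.

```python
from dataclasses import dataclass


@dataclass
class CustomerRecord:
    """Hypothetical record layout, for illustration only."""

    customer_id: str
    name: str
    email: str
    complaint_text: str
    blocked: bool = False  # True once the data is under bloqueo (LOPDGDD Art. 32)


def prepare_for_ai(records: list[CustomerRecord]) -> list[dict[str, str]]:
    """Select the minimum data a hypothetical complaint classifier needs.

    - Blocked records are skipped: they are retained for legal purposes
      but must not be actively processed.
    - Names and emails are never passed on (data minimisation); an internal
      reference lets results be linked back to the record.
    """
    return [
        {"ref": r.customer_id, "text": r.complaint_text}
        for r in records
        if not r.blocked
    ]


records = [
    CustomerRecord("C-001", "Ana", "ana@example.com", "Late delivery"),
    CustomerRecord("C-002", "Luis", "luis@example.com", "Wrong invoice", blocked=True),
]
print(prepare_for_ai(records))  # [{'ref': 'C-001', 'text': 'Late delivery'}]
```

Free-text fields can themselves contain personal data, so in practice they may need further review or redaction before being sent.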

If staff are already using unauthorised AI tools, start by avoiding a climate of fear. Many employees use AI tools because they genuinely improve productivity. Instead, conduct a risk assessment to understand what data may have been shared, implement the AI AUP immediately, provide approved alternatives that meet legitimate business needs, offer amnesty for voluntary disclosure of past unauthorised use in exchange for completing compliance training, and document your remediation efforts in case of future regulatory inquiry.

Workplace uses of AI are covered, and with heightened scrutiny. AI tools used for recruitment, performance evaluation, or workforce monitoring may constitute automated decision-making under GDPR Article 22 where decisions are based solely on automated processing and produce legal or similarly significant effects, and they are subject to Spain's enhanced workplace protections under LOPDGDD Articles 87-89 and 91. These uses almost always require a DPIA, must be disclosed to employee representatives, and require clear transparency towards affected employees.

Small organisations are covered too. GDPR and LOPDGDD apply to all organisations processing personal data, regardless of size. Whilst a sole trader might have simpler compliance needs than a multinational corporation, the fundamental requirements remain the same. The key is proportionality: your measures should be appropriate to your scale, but you cannot simply ignore these obligations because you're small.

The complete guidance document is available on the AEPD website: Recomendaciones para proteger la privacidad al usar herramientas de IA (Recommendations for protecting privacy when using AI tools; PDF, Spanish language).

The publication's timing coincides with International Data Protection Day on 28 January. This commemoration dates back to 2006, when the Council of Europe established the date to celebrate the anniversary of the signing of Convention 108, the cornerstone of data protection in Europe. The observance has since spread beyond Europe and is recognised internationally; in some countries, such as the United States, it is known as Data Privacy Day.

Disclaimer: This article is provided for informational purposes only and does not constitute legal advice. No attorney-client or professional relationship is created by reading this content. For specific compliance guidance regarding AI tool usage in your organisation, consult a qualified Data Protection Officer or privacy lawyer familiar with Spanish data protection law.

Informational Purposes Only: The content provided by ANRO DIGITAL SOLUTIONS S.L.U. (including resolution summaries, infographics, and case analyses) is for educational and informational purposes only.

No Legal Advice: This information does not constitute legal advice, a formal legal opinion, or a substitute for professional legal counsel. The interpretation of data protection laws (including the GDPR, LOPDGDD, and AEPD resolutions) is subject to change and can vary based on specific facts and circumstances.

No Liability: ANRO DIGITAL SOLUTIONS S.L.U. assumes no responsibility or liability for any actions taken, or not taken, based on the information provided on this website. While we strive for accuracy, we make no guarantees regarding the completeness or timeliness of the information.

Consult a Professional: Data protection compliance is a complex legal requirement. You should not act upon this information without seeking advice from a qualified Data Protection Officer (DPO) or a specialist data protection lawyer licensed to practise in your jurisdiction.

Third-Party Links: Links to official AEPD documents are provided for convenience. We are not responsible for the content or availability of these external government portals.

This summary is for information purposes only. For more information, see our Legal Notice (Aviso Legal).